MIDUS: Memory-Infused Depth Up-Scaling

Under review, 2025

Taero Kim, Hoyoon Byun, Youngjun Choi, Sungrae Park, Kyungwoo Song

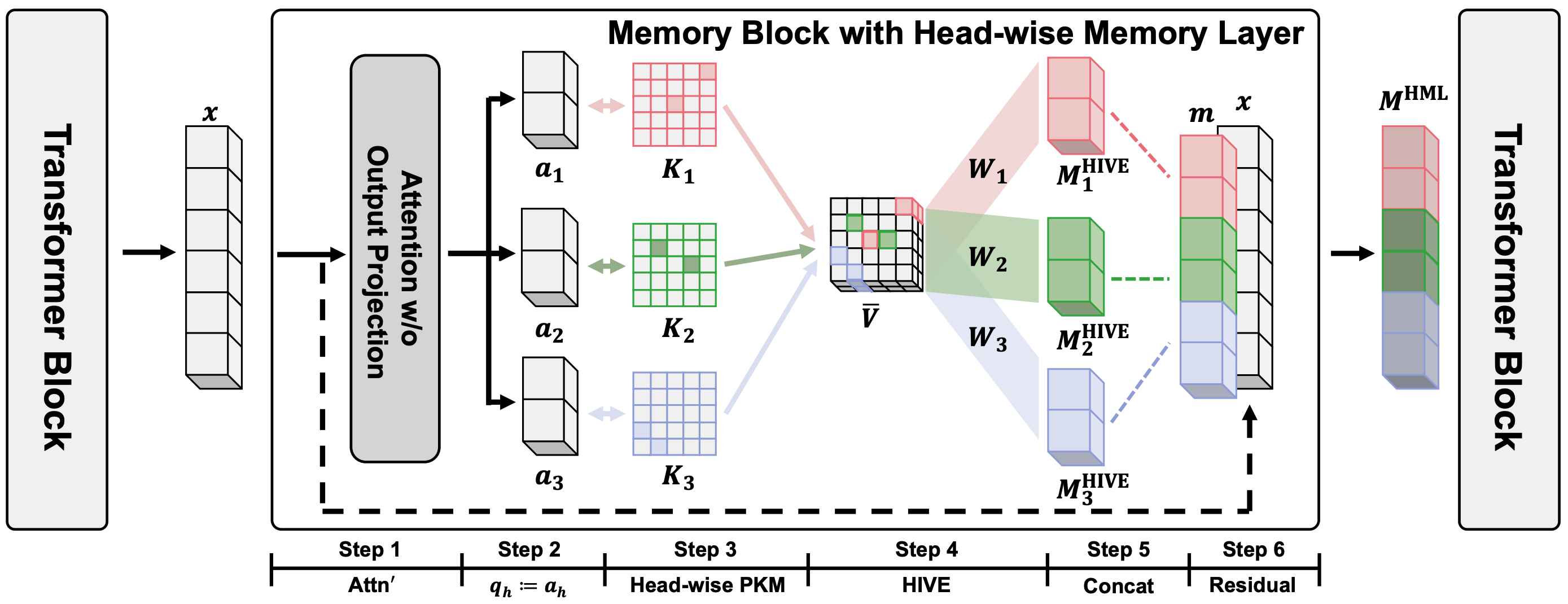

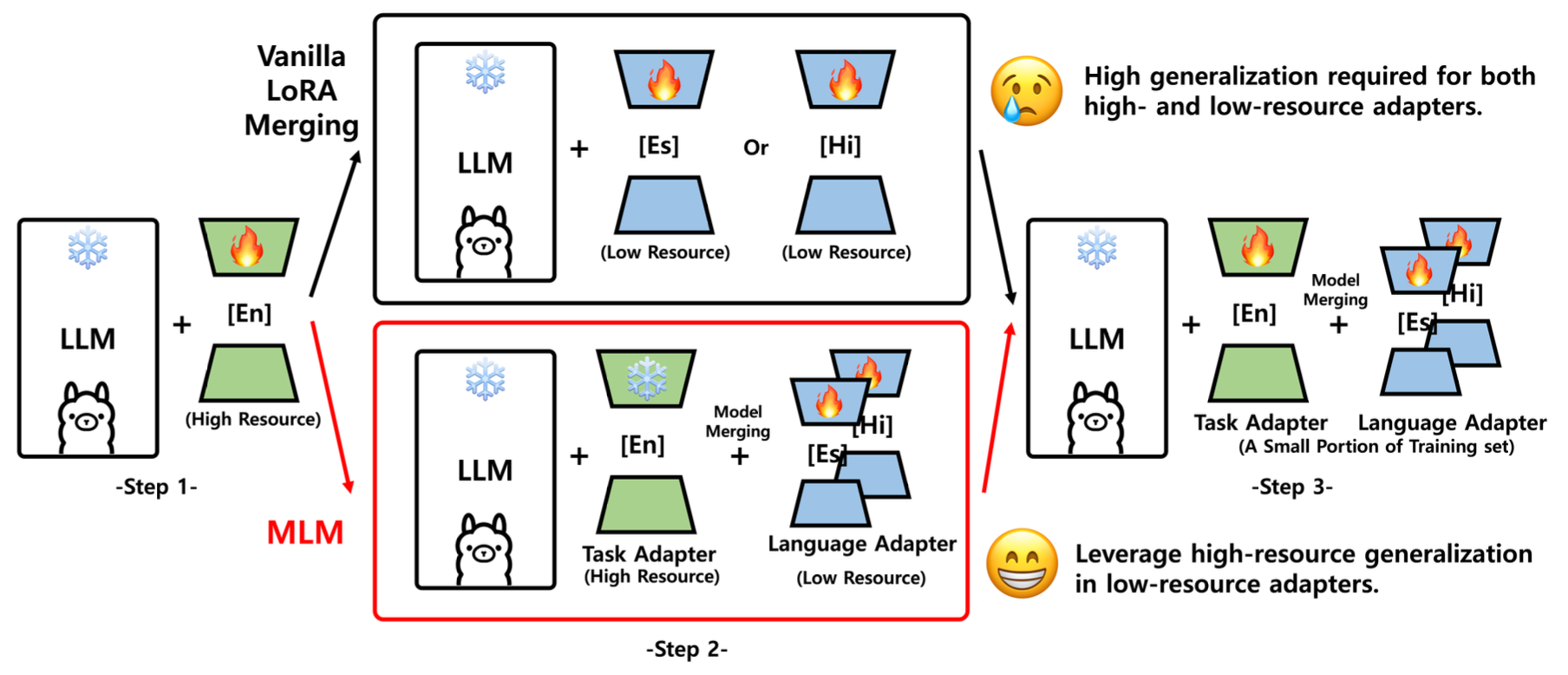

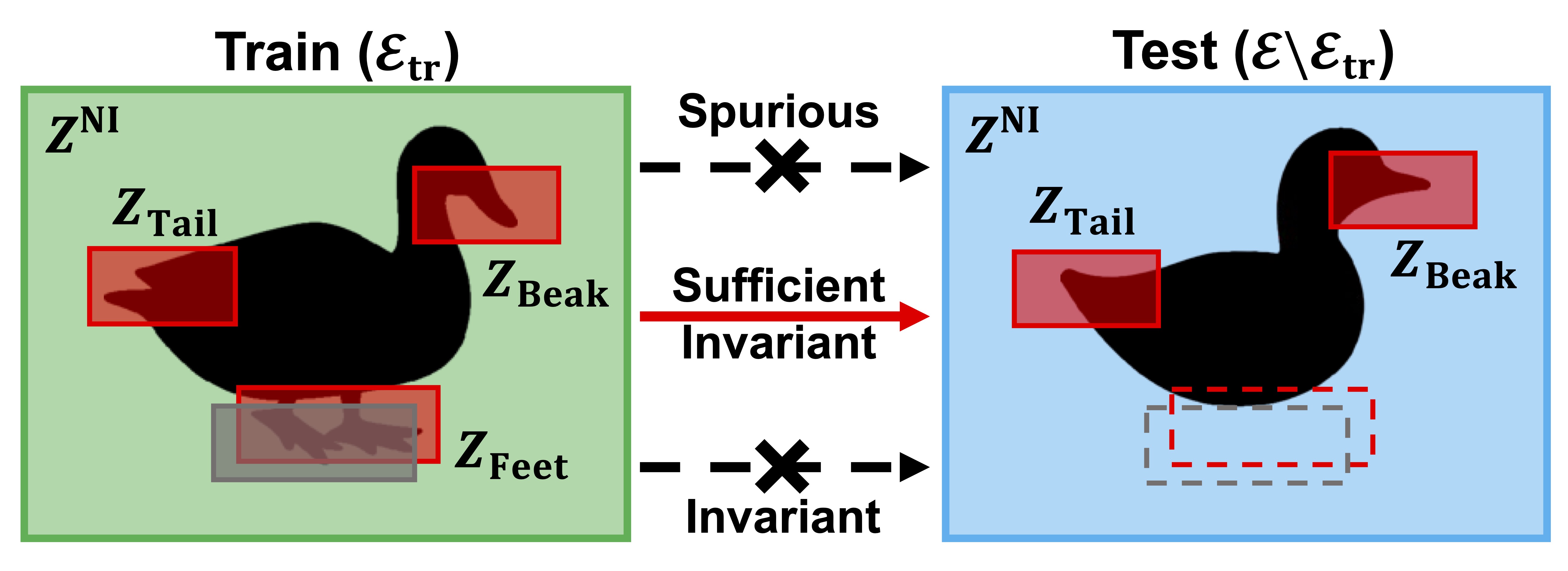

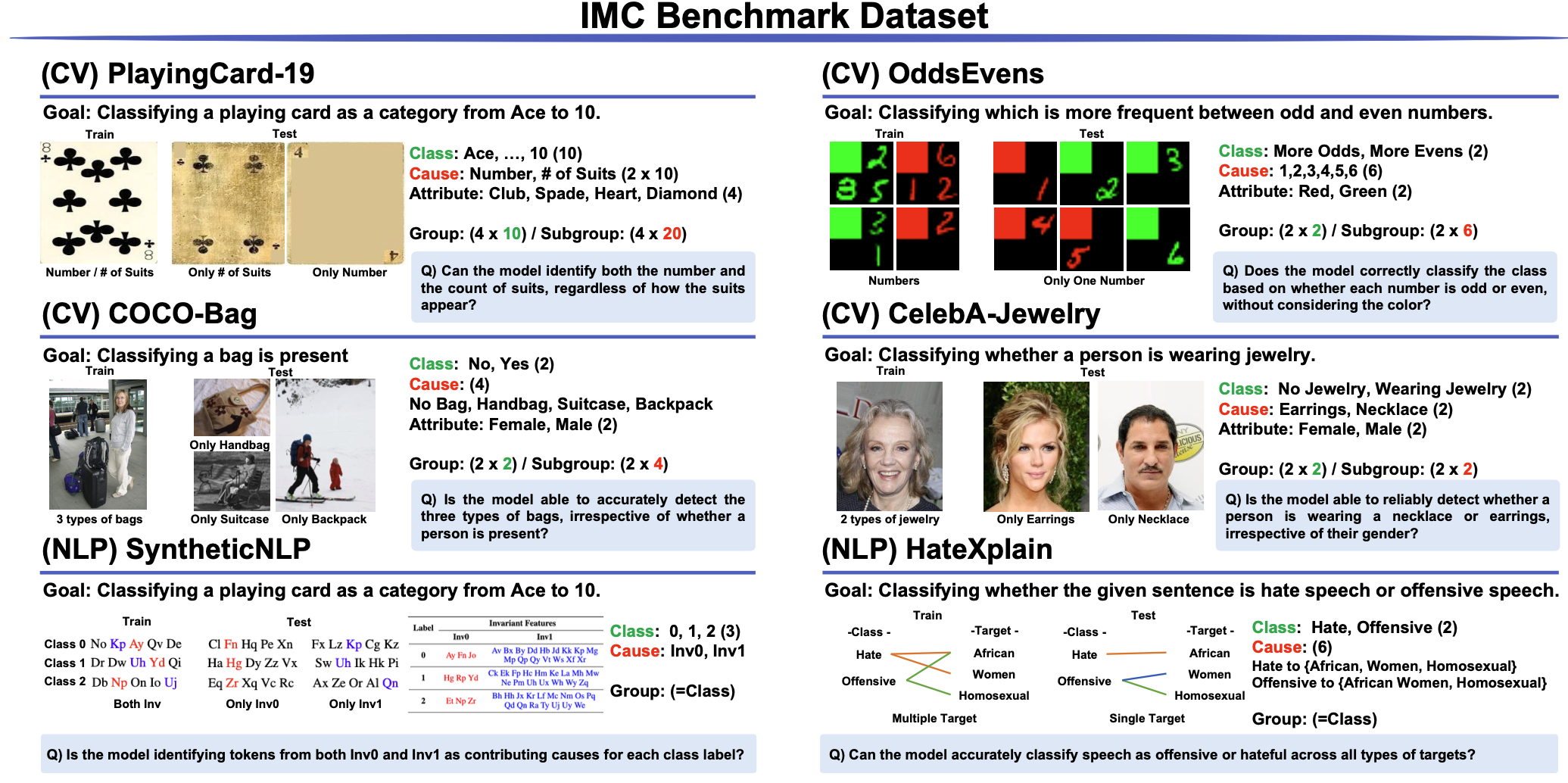

We propose MIDUS, a depth up-scaling method for large language models (LLMs) that infuses memory mechanisms into the newly inserted layers. MIDUS expands model depth efficiently while preserving pretrained knowledge and improving parameter utilization.